I recently watched a video that made me think. An Android developer, with zero design training, used Claude Code and the Figma MCP to generate a full set of mobile app designs from a markdown requirements document. In about an hour. He then compared the result to what a professional design agency had produced from the same brief, and the AI designs were… surprisingly close.

The video is titled “We don’t need designers anymore.” And honestly, watching it, I understood the impulse. I’ve had the mirror-image version of that thought myself, watching AI write surprisingly functional code in domains I know nothing about. Maybe I don’t need an engineer for this.

It’s worth noting that the video author sells courses and mentorship directed at mobile developers, and his YouTube channel is part of that marketing. That doesn’t make his experience invalid, but it does mean his incentives align with a particular narrative: that developers can now do it all themselves. A bold title like “We don’t need designers anymore” is great for engagement, and a single anecdotal comparison between one agency’s output and one AI session makes for a compelling video. What it doesn’t make for is a complete picture.

I’m not writing this to bash the video. I’m writing it because I think the subtleties he doesn’t cover are exactly the ones that matter most, and because I want to think critically about where this industry is actually headed, not just where the hype says it’s going.

That feeling of “I can do it all myself” is what I want to talk about. Because I think it’s both real and deeply misleading.

What AI actually changed about creative work

Let me be clear: what AI tools have done to creative and technical work is genuinely remarkable. Someone who openly admits his “design skills are garbage” produced a set of Figma screens that any designer would recognize as real work. Not a sketch, not a wireframe, but something with a proper design system, typographic hierarchy, and component consistency.

That capability simply didn’t exist two years ago, and it’s worth celebrating.

The same thing is happening on the engineering side. I’ve seen designers with no engineering background ship working React components, deploy basic backends, and write scripts that genuinely solve real problems. Things that would have required months of learning or a development budget now take an afternoon.

More people can build more things, and that’s generally a good thing.

The confidence trap

Here’s where it gets tricky, though. AI doesn’t just lower the barrier to producing work. It also lowers the barrier to being confident about that work. You generate something that looks polished and complete, and because you can’t see the flaws you don’t know to look for, you assume it’s good. The output looks professional, so you feel like a professional.

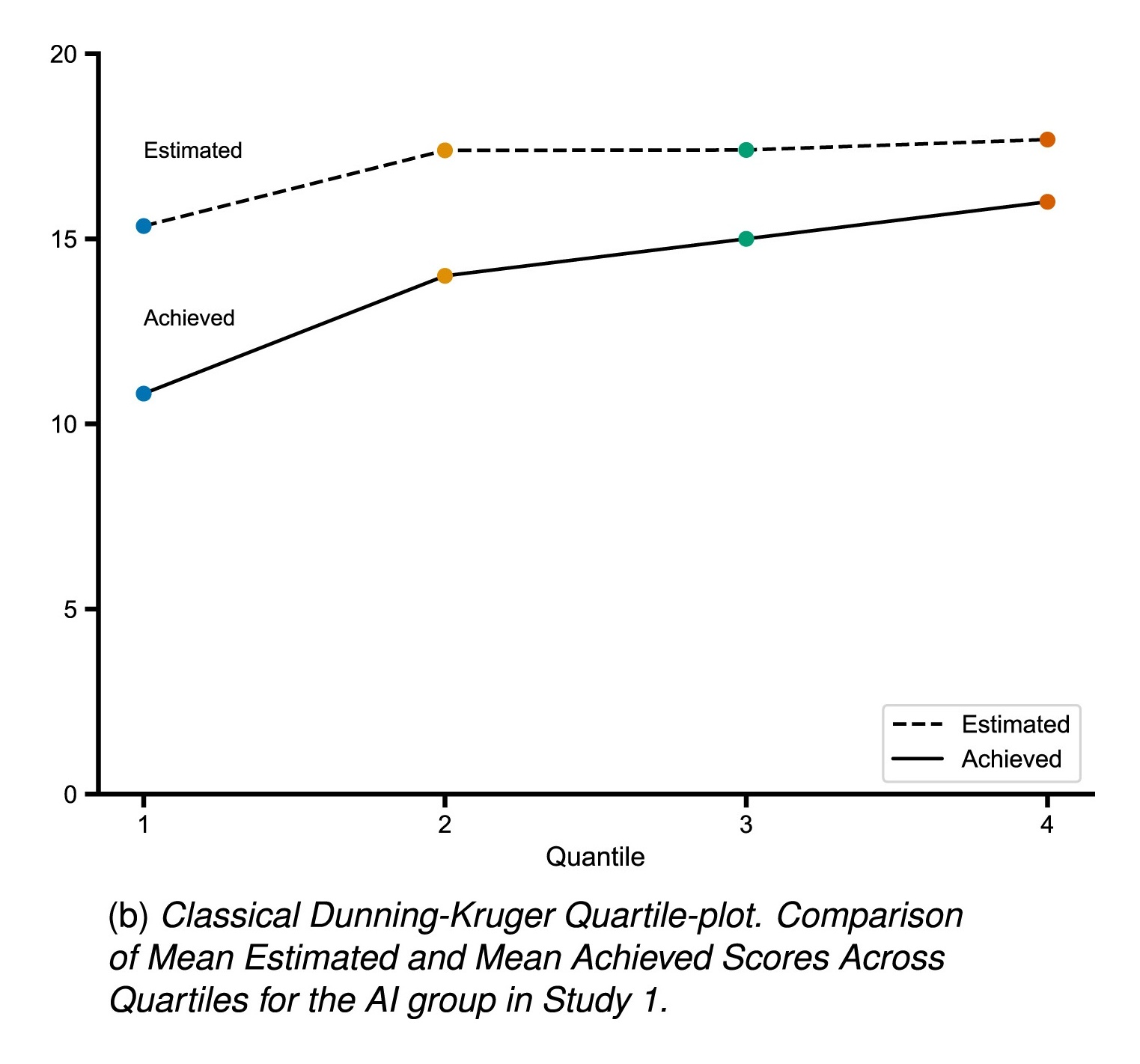

This isn’t new. Beginners have always overestimated their abilities. It’s a well-documented cognitive bias. But a recent study published in Computers in Human Behavior found something worth paying attention to: when people used AI to solve logical reasoning problems, their actual performance improved by about 3 points, but they overestimated their performance by 4 points. The classic Dunning-Kruger pattern, where low performers overestimate the most, disappeared entirely. With AI, everyone started overestimating. And perhaps most counterintuitively, higher AI literacy correlated with worse self-assessment accuracy, not better. The more comfortable you are with AI tools, the more likely you are to mistake the tool’s output for your own understanding.

The gap between “this looks done” and “this actually is done” used to be much more visible, because the raw output of a beginner was much rawer. A first-year design student’s mockup looked like a first-year design student’s mockup. Now it can look like something a mid-level designer produced on a Tuesday afternoon.

That’s where things get dangerous, because the beginner and the mid-level designer are now producing outputs that are visually indistinguishable, even though the understanding behind them is not the same. The beginner doesn’t know why certain decisions were made. They don’t know what was sacrificed. They can’t evaluate whether the navigation structure will hold up as the product grows, whether the color choices work in dark mode, or whether the information hierarchy will still make sense when the UI is populated with real data instead of placeholder text.

The AI doesn’t know those things either. It optimizes for looking right.

I see this in my own work. I can prompt an AI to generate code that compiles, runs, and even passes basic tests. It looks correct. But I lack the deep engineering intuition to evaluate whether it’ll hold up under load, whether the architecture will scale, whether there are subtle race conditions hiding in the concurrency model. A senior engineer would spot those issues in minutes. I wouldn’t know they existed until something broke in production.
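To make that concrete, here is a minimal sketch in Python of code that looks correct and passes a basic test while hiding a race condition. Everything in it is an assumption for illustration: the `Account` class, the `pause` test hook, and the numbers are hypothetical and not taken from any real project. The barrier exists only to force the unlucky timing that, in a real system, would show up rarely and unpredictably.

```python
import threading


class Account:
    """Hypothetical account whose withdraw() reads, checks, then writes without a lock."""

    def __init__(self, balance):
        self.balance = balance

    def withdraw(self, amount, pause=None):
        current = self.balance              # step 1: read the balance
        if pause is not None:
            pause()                         # test hook: lets another thread run in between
        if current >= amount:               # step 2: check against the (possibly stale) read
            self.balance = current - amount  # step 3: write based on that stale value
            return True
        return False


def basic_test():
    """Single-threaded check: the second withdrawal is correctly refused."""
    acct = Account(100)
    first = acct.withdraw(80)
    second = acct.withdraw(80)
    return first, second, acct.balance      # (True, False, 20)


def concurrent_run():
    """Two threads withdraw at once; the barrier makes both read before either writes."""
    acct = Account(100)
    barrier = threading.Barrier(2)
    results = []

    def worker():
        results.append(acct.withdraw(80, pause=barrier.wait))

    threads = [threading.Thread(target=worker) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(results), acct.balance    # ([True, True], 20)


if __name__ == "__main__":
    print("basic test:", basic_test())
    print("concurrent:", concurrent_run())
```

In the single-threaded test everything behaves. In the concurrent run both withdrawals succeed, so 160 leaves an account that held 100, and the final balance still reads 20. A common fix is to guard the read, check, and write with a single `threading.Lock`, but knowing that the guard is needed is exactly the kind of judgment the paragraph above is about.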

Why “good enough” has limits

Going back to the video: when the developer compared his AI-generated designs to the agency’s work, he noticed real differences. The agency’s step counter component looked better. Their dashboard was more considered. He spotted a UX mistake in the AI output, a destructive action (“reset today’s steps”) buried in the navigation drawer where users wouldn’t expect it and might trigger it by accident. He caught it because he’s a developer who has thought about interaction patterns. A complete beginner might not have.

But here’s the thing that the video glosses over: if he had hired a better agency, the gap would likely have been much wider. He compared his AI output to one specific agency’s work and found them roughly equivalent. Professional design exists on a spectrum, though, and a really good product design team, one that does research, interaction design, and systems thinking, would have produced something meaningfully different. The difference would be harder to see in a screenshot, but the gap in the details that matter over time would be significant.

This cuts both ways. A vibe-coded backend might handle your 50 beta users just fine. But when you have 50,000 users hitting that endpoint concurrently, the architectural choices that felt invisible at small scale become the difference between a product that works and one that falls over.

AI raised the floor. It didn’t raise the ceiling.

The pattern we keep repeating

Every few years there’s a wave of tools that makes it easier for one discipline to do another discipline’s job. Squarespace let non-designers build websites. Webflow made it easier still. No-code tools let non-engineers build apps. Each time, someone writes a piece about how we don’t need [fill in the blank] anymore.

And each time, the professionals in the “deprecated” discipline went through a rough patch, only to find that demand for them actually went up, because more people were building things, and eventually those things needed to get serious. The Squarespace websites needed to scale. The no-code apps hit their limits. The vibe-coded systems ran into problems that required real expertise to solve.

Designing or developing a full product with AI help is perfectly fine for simple or low-stakes work. A personal project. A quick prototype to test an idea. An internal tool used by three people. But when things get serious, when real users depend on it, when the product needs to grow and be accessible and maintainable, you can’t rely on yourself to do things outside your expertise. Not because you’re not smart enough, but because expertise isn’t just about knowing how to do things. It’s about knowing which questions to ask.

I can vibe-code a feature. I won’t know what I don’t know until it breaks.

Why collaboration matters more now, not less

Here’s the part I actually find hopeful, though. The rise of AI tools has made the collaboration between designers and engineers more necessary, not less, and it has also given us a much better way to do it.

Think about what used to happen when a designer pushed for a subtle animation that an engineer saw as unnecessary polish. The designer couldn’t fully grasp the technical cost of what they were asking for, and the engineer couldn’t fully see why that “useless detail” would actually help users understand a state change and feel more confident in the interface. Both sides were right, but they were talking past each other.

AI can be a translator here. As a designer, I can now use AI to better understand the technical constraints behind a pushback, to see why something that looks simple in Figma might be genuinely hard to build and maintain. An engineer can use AI to explore why a design decision that seems cosmetic actually solves a real usability problem. Instead of each side guessing at the other’s reasoning, AI can help bridge that gap in understanding.

Using AI as a tutor and translator, to help designers and engineers work better with each other, may be far more valuable in the long run than the short-term rush we get from using it to vibe-code and vibe-design everything ourselves. The person who understands both sides well enough to ask the right questions and spot the right problems isn’t going to be replaced by AI. That person is a collaborator, and the best product teams I’ve worked with are full of them.

Things you can do right now

I don’t want to end this as a vague warning. Here are the things I try to keep in mind as a designer working alongside AI tools:

Develop taste in the adjacent discipline. You don’t need to be a developer to recognize when generated code is structurally messy. You don’t need to be a designer to know when a generated layout doesn’t scale. Learn enough about the other side to be a useful critic.

Treat AI output as a strong first draft, never a final answer. The developer in the video needed his own judgment to catch the navigation drawer mistake. That judgment was his, not the AI’s. Your job is to bring that judgment to whatever the AI produces.

Be honest about what’s at stake. For a side project? Fine, vibe-code the whole thing. For something real users depend on? Bring in people who know what they’re doing. The cost of getting it wrong compounds over time.

Seek out the collaborations AI can’t replace. The conversation between a designer and an engineer who both deeply understand the product isn’t something you can prompt for. It produces decisions that are better than either person would make alone. Protect that.

Remember that the ceiling is still human-made. AI raised the floor, but the best products are still built by people who care deeply about what they’re making, who challenge each other, and who can recognize when something isn’t good enough yet.

These are uncertain times for everyone in creative and technical fields. I think the honest answer to “will AI replace me?” is: it depends on what you bring to your work beyond execution. If what you bring is mostly turning a specification into a file, translating a brief into a layout, implementing a ticket, then yes, that part is being automated. But if you bring judgment, curiosity, craft, and a genuine commitment to getting things right, you’re not going anywhere. You’re probably more valuable than you were two years ago.

The floor went up. Now we get to raise the ceiling.

Yes, I used AI to help me structure and refine this article.